Cameras have become so widespread that many of us carry one in our pocket wherever we go. With a single tap on a smartphone, we can record crisp, clear videos on the go. We have even mounted cameras on drones and other robots, letting us see places and perspectives that would otherwise be out of reach. The Indian Space Research Organisation (ISRO) has successfully landed one such robot, the Pragyan rover, on the surface of the Moon. But recording a video is not always a smooth affair: environmental and instrumental constraints often degrade the quality of the footage we capture.

One persistent challenge is obtaining a stable video while recording on the move, especially when the camera is operated remotely. Researchers from the Indian Institute of Technology Bombay (IIT Bombay) have now developed a mathematical algorithm that stabilises videos by removing unintended motion from them.

“When the camera is mounted on a robot and this robot is moving, I want to capture that movement. Anything extra getting associated with that movement is undesired for me,” remarks Prof. Leena Vachhani, a professor at IIT Bombay and an author of the new research.

Unintended robotic motion can arise from oscillations caused by the execution of new commands, from an unstable camera platform, or from the robot's lack of sophisticated feedback sensors.

There are many ways to stabilise a video. The simplest is to use a steady platform, such as a tripod, a gimbal (a pivoted support that allows the camera to rotate smoothly about an axis) or a similar device. These give the camera a stable base so that it can move without too many perturbations. Drones and robots may incorporate gimbals, which use motors to keep the camera steady on each axis. However, a gimbal adds weight to the setup, which is undesirable in some cases. Apart from mechanical stabilisers, digital stabilisation uses software to remove unwanted motion from a video. These approaches often rely on video enhancement or correction techniques that remove the effects of unintended motion after the video is recorded.

Conventional digital stabilisation techniques usually rely on a host of sensors, such as inertial measurement units (IMUs), which measure the acceleration and rotation of the camera and the robot, from which the unintended motion can be deduced. When on-board sensors are not viable, these techniques turn to feature tracking or motion extraction. Feature tracking follows certain constant features in a scene, such as objects or shapes, across successive frames, while motion extraction estimates the movement of the camera itself; both aim to identify the perturbations caused by unintentional motion. Both methods are computationally demanding. Conventionally, this computation is done at the remote hand-held controller. The video therefore has to be relayed back to the controller, which processes it and produces the final output, and this makes the controllers bulky.

“The software that is used for stabilisation should be light enough, and computationally very inexpensive, so that I can do it with simple microcontrollers and my hand-held remote controller is not bulky,” explains Prof. Vachhani.

For their new method, the researchers at IIT Bombay aimed to develop a lightweight digital solution that does not rely on sophisticated hardware or feature tracking. They developed a novel method based on a mathematical technique called singular value decomposition, which breaks a block of data down into a small number of dominant components. The method associates values called eigenvalues with important characteristics of the video, such as shapes and objects. Tracking how these eigenvalues change over time then reveals the effects of unintended motion on the video.

According to Prof. Vachhani, “Eigenvalues capture the main characteristics of the video. Singular value decomposition helps me identify the main eigenvalues and coefficients associated with those values.” These coefficients can then be used to determine whether the video is shaky.
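The article does not spell out how the decomposition is applied to the footage. The sketch below shows one common reading of "eigenvalues and coefficients", under our own assumptions: each frame is flattened into a column of a matrix A, the leading singular value of A (the square root of the largest eigenvalue of AᵀA) measures how strongly the dominant visual pattern is present, and each frame's coefficient on that pattern shows how its weight changes over time. The function name `dominant_mode` and the use of power iteration are hypothetical illustrations, not the researchers' code.

```python
import math

def dominant_mode(frames, iterations=200):
    """Hypothetical sketch: estimate the leading singular value of a frame
    stack and each frame's coefficient on the leading spatial mode, using
    power iteration on a matrix whose column j is frame j.

    frames: list of equally sized lists of pixel intensities, one per frame.
    Returns (sigma, coeffs), where coeffs[j] is frame j projected onto the
    leading mode.
    """
    n_frames = len(frames)
    n_pixels = len(frames[0])
    # Start from a uniform guess for the right singular vector (one entry per frame).
    v = [1.0 / math.sqrt(n_frames)] * n_frames
    sigma = 0.0
    for _ in range(iterations):
        # u = A v, then normalise: the current estimate of the leading spatial mode.
        u = [sum(frames[j][i] * v[j] for j in range(n_frames)) for i in range(n_pixels)]
        norm_u = math.sqrt(sum(x * x for x in u))
        if norm_u == 0.0:
            return 0.0, [0.0] * n_frames
        u = [x / norm_u for x in u]
        # w = A^T u; its length is the current singular value estimate.
        w = [sum(frames[j][i] * u[i] for i in range(n_pixels)) for j in range(n_frames)]
        sigma = math.sqrt(sum(x * x for x in w))
        v = [x / sigma for x in w]
    # Each frame's coefficient on the leading mode is sigma * v[j] (= u . frame_j).
    coeffs = [sigma * x for x in v]
    return sigma, coeffs
```

In a steady clip the coefficients would stay roughly constant or drift slowly; a shaky clip would make them fluctuate from frame to frame. Power iteration is used here only to keep the sketch dependency-free; a real system would more likely call a linear-algebra library's SVD routine.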

The stabilisation technique relies on the periodic structure of the disturbances. Intentional motion is usually smooth, whereas unintentional motion appears as sudden jerks or wobbles.

“Unintentional motion is going to have a very jittery kind of motion and is associated with periodic signals,” remarks Prof. Vachhani.
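The article does not describe how the periodic signals are detected. A minimal sketch, assuming the input is a per-frame signal (for example, how one tracked coefficient changes over time) and that the intended motion is roughly a straight-line trend over the analysis window: remove the trend, then use a discrete Fourier transform to find the strongest repeating frequency. The function names `detrend` and `dominant_frequency_bin` are hypothetical.

```python
import cmath
import math

def detrend(signal):
    """Subtract the least-squares straight line, a stand-in for smooth,
    intended motion (an assumption of this sketch)."""
    n = len(signal)
    t_mean = (n - 1) / 2.0
    y_mean = sum(signal) / n
    num = sum((t - t_mean) * (y - y_mean) for t, y in enumerate(signal))
    den = sum((t - t_mean) ** 2 for t in range(n))
    slope = num / den
    return [y - (y_mean + slope * (t - t_mean)) for t, y in enumerate(signal)]

def dominant_frequency_bin(signal):
    """Return the DFT bin (cycles per window) with the largest magnitude,
    ignoring the zero-frequency term, after the linear trend is removed."""
    residual = detrend(signal)
    n = len(residual)
    best_bin, best_mag = 0, -1.0
    for k in range(1, n // 2 + 1):
        coeff = sum(x * cmath.exp(-2j * math.pi * k * t / n) for t, x in enumerate(residual))
        if abs(coeff) > best_mag:
            best_bin, best_mag = k, abs(coeff)
    return best_bin
```

For example, a 64-frame signal made of a steady drift plus a wobble that repeats five times in the window, `[0.5 * t + 2.0 * math.sin(2 * math.pi * 5 * t / 64) for t in range(64)]`, yields bin 5. Without detrending, the drift itself would dominate the lowest frequency bins and mask the wobble.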

Once identified, the periodic signals are filtered out using another mathematical tool called a shape preservation filter. As the name suggests, the filter removes the additional periodic component from the signal while preserving the signal's original shape. Once the periodic signals have been filtered out, the video is considered stabilised. The method thus removes only the effects of unintended motion, while preserving the desired motion in the scene.
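The article does not give the details of the shape preservation filter, so the sketch below swaps in a different, simpler technique to illustrate the same goal of removing a periodic component while keeping the underlying shape: a centred moving average whose window spans exactly one period of the jitter. Averaging over one full cycle cancels a sinusoid of that period, while a straight-line trend passes through unchanged. It assumes the jitter period is known and is an odd whole number of frames; the function name `remove_periodic_jitter` is hypothetical.

```python
import math

def remove_periodic_jitter(signal, period):
    """Hypothetical sketch (not the researchers' filter): suppress a periodic
    wobble of a known, odd integer period (in frames) with a centred moving
    average spanning exactly one period.

    Averaging over one full cycle cancels a sinusoid of that period while
    leaving a straight-line trend unchanged. Near the ends, where a full
    window does not fit, the original samples are kept.
    """
    if period < 1 or period % 2 == 0:
        raise ValueError("this sketch expects an odd period so the window can be centred")
    half = period // 2
    out = list(signal)
    for t in range(half, len(signal) - half):
        window = signal[t - half:t + half + 1]
        out[t] = sum(window) / period
    return out
```

For instance, a signal that drifts steadily by 0.3 per frame with a wobble of period 7 frames added on top comes back as the plain drift everywhere except the first and last three frames. Unlike the researchers' filter, this simple average would also smear any fast intended motion, which is one reason a purpose-built filter is needed.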

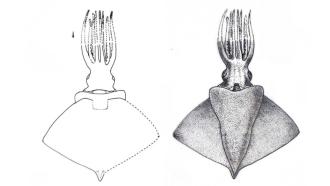

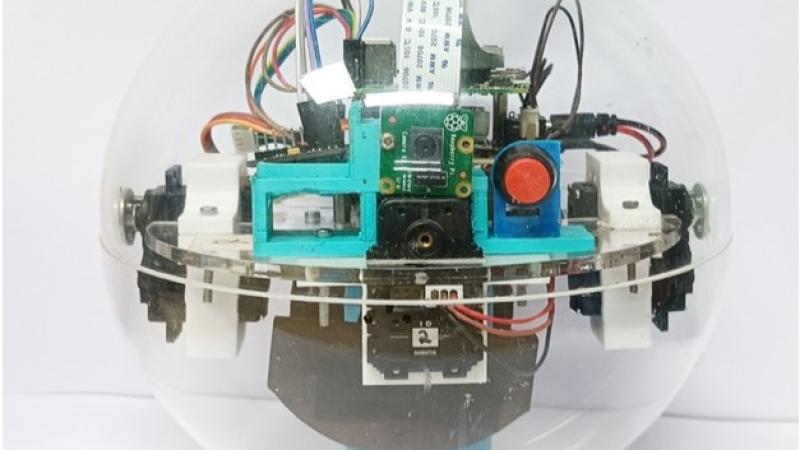

Tests on two types of small robots, a spherical one (about the size of a football) and an unmanned aerial vehicle (UAV), showed promising results. The processed videos were clearly better stabilised, even under challenging environmental conditions and with large amplitudes of unintended motion. However, further research is needed to assess how the lightweight stabilisation method performs in different environments and surveillance applications.

Small robots and drones are increasingly used for both commercial and security tasks, such as photography, mapping, visual inspection, target detection and tracking. By significantly improving the quality of the video these robots capture, the new method could expand the use of small robots for remote visual observation.