Photo: Vidisha Kulkarni / Research Matters

Imagine you are in the middle of your daily Bharatnatyam lesson. You dance to Carnatic music, telling a story through eloquent expressions and fluid movements. As you reach the end of your moving performance, you await the comments of your teacher: a computer that critiques your finesse. This future is closer than you think, thanks to Prof. Dinesh Jayagopi and his student Ms. Pooja Venkatesh at the International Institute of Information Technology, Bengaluru, who have developed algorithms that estimate the expertise of Bharatnatyam dancers by observing their postures and expressions.

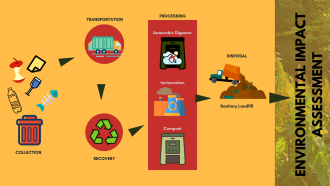

Bharatnatyam is one of the most ancient and most expressive Indian classical dance forms. It comprises challenging poses and dramatic expressions derived from four basic poses known as the Bhangas, and nine expressions known as the Navarasas, each corresponding to a different emotion (Bhava). Prof. Jayagopi and his team have worked on two related tasks: recognizing the poses and expressions, and then training an algorithm to predict the expertise of the dancer using Machine Learning, an approach in which a computer, given a set of input and output data, learns the relation between the two. First, a set of videos of dancers, together with the rating a dance expert gave each of them (the ‘training’ dataset), is provided to the algorithm so that it can ‘learn’ this relation. The algorithm is then given a different set of videos (the ‘test’ dataset) to check how accurately it can predict a dancer’s expertise.
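The train-then-test procedure can be sketched in a few lines of Python. This is only an illustration, not the team’s code: the two-number feature vectors, the ratings and the simple nearest-centroid classifier are all assumptions chosen to keep the example self-contained.

```python
import random

def train_test_split(samples, test_fraction=0.25, seed=0):
    """Shuffle the samples and hold out a fraction of them for testing."""
    rng = random.Random(seed)
    shuffled = samples[:]
    rng.shuffle(shuffled)
    n_test = max(1, int(len(shuffled) * test_fraction))
    return shuffled[n_test:], shuffled[:n_test]

def fit_centroids(train):
    """'Learn' one average feature vector (centroid) per rating."""
    sums, counts = {}, {}
    for features, label in train:
        acc = sums.setdefault(label, [0.0] * len(features))
        for i, value in enumerate(features):
            acc[i] += value
        counts[label] = counts.get(label, 0) + 1
    return {label: [s / counts[label] for s in acc] for label, acc in sums.items()}

def predict(centroids, features):
    """Return the rating whose centroid is closest to the given features."""
    def sq_dist(centroid):
        return sum((f - c) ** 2 for f, c in zip(features, centroid))
    return min(centroids, key=lambda label: sq_dist(centroids[label]))

def accuracy(centroids, test):
    """Fraction of held-out samples whose rating is predicted correctly."""
    correct = sum(predict(centroids, f) == label for f, label in test)
    return correct / len(test)

# Hypothetical data: (feature vector, expert rating) for eight videos.
data = [
    ([0.90, 0.80], "excellent"), ([0.85, 0.90], "excellent"),
    ([0.95, 0.85], "excellent"), ([0.80, 0.95], "excellent"),
    ([0.20, 0.10], "poor"), ([0.10, 0.20], "poor"),
    ([0.15, 0.25], "poor"), ([0.25, 0.15], "poor"),
]

train, test = train_test_split(data)
model = fit_centroids(train)
test_accuracy = accuracy(model, test)
```

The key point is that the test videos are never seen while the centroids are computed, so the accuracy reflects how well the learnt relation carries over to new dancers.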

To recognize the poses, the team used Microsoft’s Kinect, a motion-sensing device for the Xbox series, to record videos of the dancers, extract their skeletal structure and obtain the locations of their joints. The dancers performed twenty-two postures, all derived from the Bhangas. Open-source software called OpenNI was used to locate the joints and to calculate the angles formed at the joints as the dancers held each posture. The intuition behind this step was that the joint angles would vary depending on the pose. These joint angles were fed to a Machine Learning classifier called a Support Vector Machine (SVM), which learnt to match the values with the corresponding poses. The algorithm recognized the poses correctly 87% of the time.
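To make the joint-angle idea concrete, here is a minimal sketch of how the angle at one joint can be computed from three 3D joint positions. It does not use OpenNI; the function name and the coordinates are hypothetical, and the article does not say exactly how the team computed its angles.

```python
import math

def joint_angle(a, b, c):
    """Angle in degrees at joint b, between the segments b->a and b->c."""
    ba = [a[i] - b[i] for i in range(3)]
    bc = [c[i] - b[i] for i in range(3)]
    dot = sum(x * y for x, y in zip(ba, bc))
    norm_ba = math.sqrt(sum(x * x for x in ba))
    norm_bc = math.sqrt(sum(x * x for x in bc))
    if norm_ba == 0 or norm_bc == 0:
        raise ValueError("joints coincide; the angle is undefined")
    # Clamp to [-1, 1] to guard against floating-point drift before acos.
    cos_theta = max(-1.0, min(1.0, dot / (norm_ba * norm_bc)))
    return math.degrees(math.acos(cos_theta))

# Hypothetical (x, y, z) joint positions in metres for one arm.
shoulder = (0.0, 1.4, 2.0)
elbow = (0.3, 1.4, 2.0)
wrist = (0.3, 1.7, 2.0)

# Forearm raised at a right angle to the upper arm.
elbow_angle = joint_angle(shoulder, elbow, wrist)
```

Repeating this for every joint of interest gives one list of angles per posture, which is the kind of numeric input a classifier such as an SVM can work with.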

To analyze expressions, the team chose four: joy (hasya), surprise (adbhuta), sadness (karuna) and disgust (bibhatsa). For this exercise, videos of the dancers were recorded with a computer webcam and fed to FACET, a software package for expression recognition. “FACET takes the videos of the dancers and outputs intensity values of the expressions. Our algorithm uses these values to summarize how expressive a dancer is”, explains Prof. Jayagopi. The team found that most of the expressions were recognized with an accuracy of roughly 81% using Logistic Regression, another Machine Learning classifier.
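One plausible way to “summarize” a stream of per-frame intensity values is to reduce it to a few statistics. The sketch below is an assumption for illustration only: the article does not say which summary the team used, and the intensity values and the choice of mean, peak and spread are hypothetical.

```python
from statistics import mean, pstdev

def summarize_intensity(frames):
    """Reduce per-frame expression intensities to a few summary numbers."""
    if not frames:
        raise ValueError("need at least one frame")
    return {
        "mean": mean(frames),      # average intensity over the clip
        "peak": max(frames),       # strongest moment of the expression
        "spread": pstdev(frames),  # how much the intensity varies
    }

# Hypothetical 'joy' intensities for five video frames, on a 0-to-1 scale.
joy_intensity = [0.1, 0.4, 0.9, 0.8, 0.3]
summary = summarize_intensity(joy_intensity)
```

Summaries like these turn a video of any length into a fixed-size set of numbers, which a classifier such as Logistic Regression can then take as input.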

As with the recognition task, the expertise prediction task was carried out separately for postures and expressions. A dance expert watched the videos of the dancers and rated their postures as ‘excellent’, ‘good’, ‘satisfactory’ or ‘poor’. The algorithm (in this case, Logistic Regression) found it easier to differentiate between excellent and poor dancers than between excellent and good dancers. This is because, in the latter case, the poses of the dancers did not differ much and the dancers were almost equally good.

Similarly, the dancers were rated as ‘excellent’, ‘average’ or ‘poor’ based on their ability to emote. The expertise prediction task in this scenario was more involved; based on the prediction accuracy achieved by the algorithm, the researchers were able to find out which emotions were equally difficult to express for all categories of dancers. A ‘confusion matrix’ was used to quantify how often the algorithm ‘confused’ one rating category with another for each expression. For instance, the algorithm had trouble categorizing a dancer as ‘excellent’ or ‘average’ when analyzing ‘joy’. This suggested that ‘joy’ was the easiest to perform for all classes of dancers.
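A confusion matrix is simply a table that counts, for each true rating, how often each rating was predicted. The minimal sketch below uses made-up ratings for six videos; the numbers are hypothetical and are not the study’s results.

```python
from collections import Counter

def confusion_matrix(true_labels, predicted_labels, classes):
    """Rows are the expert's ratings, columns are the algorithm's predictions."""
    counts = Counter(zip(true_labels, predicted_labels))
    return [[counts[(t, p)] for p in classes] for t in classes]

classes = ["excellent", "average", "poor"]

# Hypothetical expert ratings and algorithm predictions for six videos.
expert = ["excellent", "excellent", "average", "average", "poor", "poor"]
predicted = ["excellent", "average", "excellent", "average", "poor", "poor"]

matrix = confusion_matrix(expert, predicted, classes)

# Correct predictions lie on the diagonal of the matrix.
correct = sum(matrix[i][i] for i in range(len(classes)))
overall_accuracy = correct / len(expert)
```

In this toy example the off-diagonal counts sit between ‘excellent’ and ‘average’, while ‘poor’ is never mistaken for anything else. That is the kind of pattern the article describes for ‘joy’.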

This work on recognition and expertise prediction of Bharatnatyam dancers is the first step towards automated training. The applications of such an algorithm are manifold. Prof. Jayagopi plans to extend the work to include video datasets and sequential labelling in real time. “The applications of real time recognition would include Bharatnatyam video summarization or video content analysis”, he says. This means that algorithms will eventually be able to analyze the numerous videos on the Internet, automatically tag them as Bharatnatyam videos and even provide a summary of the video content, thereby making video search more accurate. This research certainly does elevate self-learning to a whole new level!